Shortly after we deployed our A.I. module, it detected an anomaly. The tool, designed to help utilities optimize inspections through machine learning, uses a classification model to separate ‘good’ poles from ‘bad’ ones. The model can then predict which uninspected poles should be inspected next, saving time and money and preventing outages.

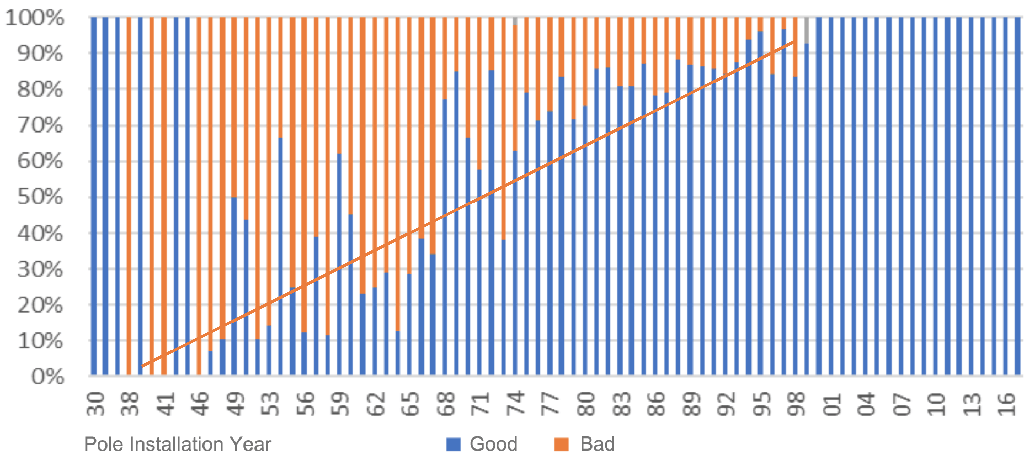

In an obvious example, old poles are more likely to be bad than newer ones. See image below. Thus, old poles should be inspected first. So far so good; we probably don’t need A.I. to tell us that.

But what the A.I. found was far more mysterious: a disturbance in the force, if you will ;)

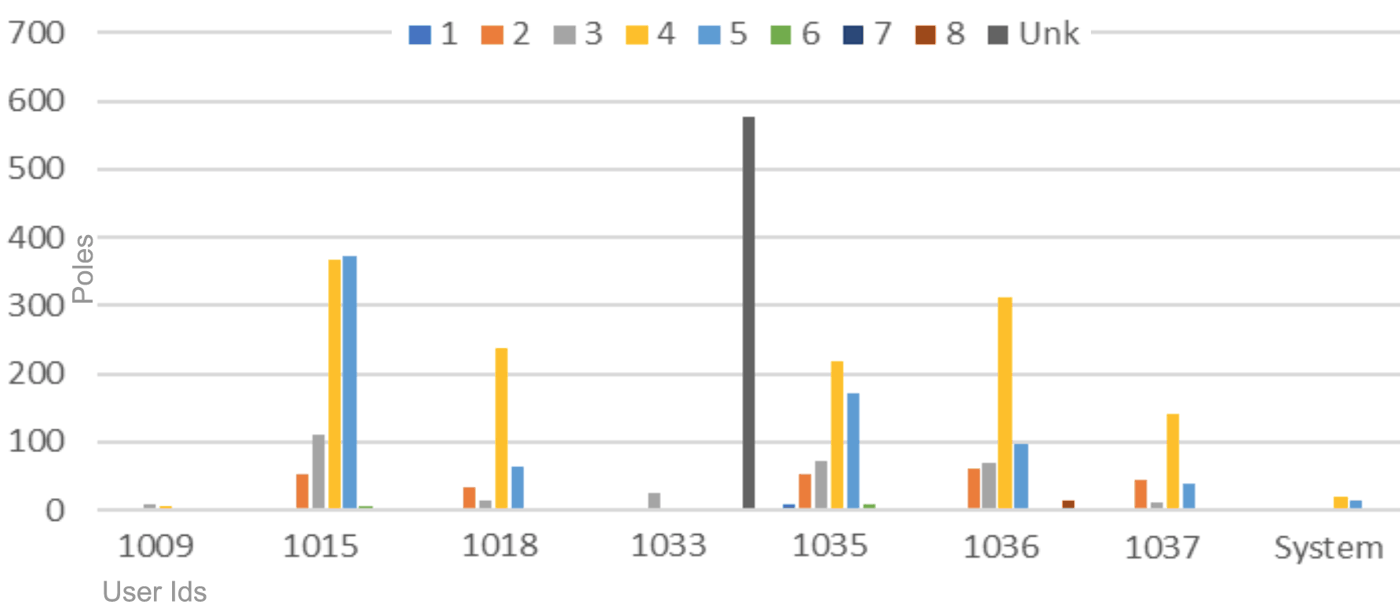

Typically, you expect each inspection crew to find some bad poles and some good ones. See the image below. Each color represents a different kind of pole damage. But the anomaly detection algorithm found an outlier, tracing back to one particular user, 1033. The other users show a healthy mix of different kinds of pole damage, but user 1033 does not. In fact, all of this user’s poles are marked ‘unknown’, which means ‘no known damage’. Given the other users’ results, it is hard to imagine that this user just happened to find nothing but perfect poles. Instead, it suggests that these poles were never actually inspected.

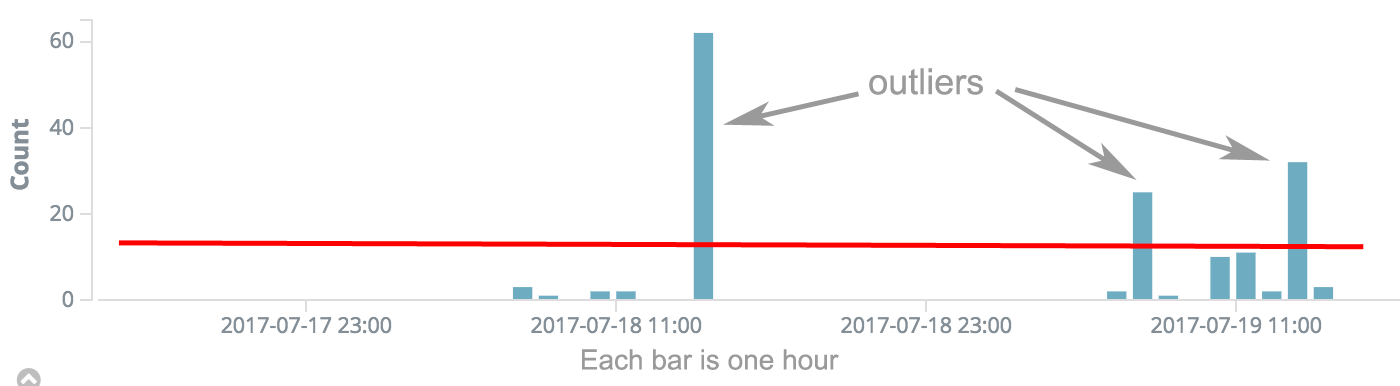
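To make the idea concrete, here is a minimal sketch of this kind of check. It is not the algorithm our module runs: it simply flags users whose inspections almost all carry the same label. The function name, the thresholds (`max_share`, `min_poles`), the damage labels other than ‘unknown’, the user ids other than 1033 and the sample data are all made up for illustration.

```python
from collections import Counter, defaultdict


def flag_uniform_inspectors(records, label="unknown", max_share=0.95, min_poles=20):
    """Flag users whose inspections almost all carry the same label.

    records: iterable of (user_id, damage_label) pairs (hypothetical schema).
    Returns {user_id: share of that user's poles carrying `label`}.
    """
    per_user = defaultdict(Counter)
    for user_id, damage in records:
        per_user[user_id][damage] += 1

    flagged = {}
    for user_id, counts in per_user.items():
        total = sum(counts.values())
        share = counts[label] / total
        # Skip users with too few poles to say anything meaningful.
        if total >= min_poles and share >= max_share:
            flagged[user_id] = share
    return flagged


# Made-up illustration data: two ordinary users and one suspicious one.
records = (
    [(1001, "unknown")] * 30 + [(1001, "rot")] * 12 + [(1001, "woodpecker")] * 8
    + [(1002, "unknown")] * 25 + [(1002, "split")] * 15 + [(1002, "rot")] * 10
    + [(1033, "unknown")] * 50
)

flagged = flag_uniform_inspectors(records)
print(flagged)  # {1033: 1.0}
```

A fixed threshold like this is deliberately crude. A real setup would more likely compare each user against the pooled distribution of everyone else, but the principle is the same: a user with no variation at all stands out.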

Further investigation showed that most of the ‘work’ occurred in short bursts rather than being spread evenly over time. See image below. Normally, an inspector drives to a pole, performs the inspection and moves on to the next one, with a time-between-poles of 3-10 minutes, depending on pole type, geography etc.
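A burst check like this can be sketched in a few lines. The example below is an assumption about how one might do it, not the code we ran: it measures what share of a user's consecutive inspections are closer together than the 3-minute lower bound mentioned above. The function name, timestamps and dates are invented for illustration.

```python
from datetime import datetime, timedelta


def short_gap_share(timestamps, min_gap=timedelta(minutes=3)):
    """Share of consecutive inspections closer together than `min_gap`.

    timestamps: datetimes of one user's inspections (hypothetical input).
    """
    ordered = sorted(timestamps)
    gaps = [later - earlier for earlier, later in zip(ordered, ordered[1:])]
    if not gaps:
        return 0.0
    return sum(gap < min_gap for gap in gaps) / len(gaps)


# Made-up illustration data: one pole every 6 minutes vs. one every 20 seconds.
start = datetime(2024, 1, 1, 8, 0)
normal = [start + timedelta(minutes=6 * i) for i in range(10)]
burst = [start + timedelta(seconds=20 * i) for i in range(10)]

normal_share = short_gap_share(normal)
burst_share = short_gap_share(burst)
print(normal_share, burst_share)  # 0.0 1.0
```

A share close to 1.0 means nearly every inspection was logged faster than anyone could plausibly drive between poles.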

So what happened was that one inspector went rogue. The ‘rogue one’ simply marked the inspections as being done without actually doing the work.

Needless to say, the rogue one is no longer employed by our client. Also needless to say, the A.I. will stay.

Stay tuned for more A.I.-related content. In the meantime, contact us to see what we can do with your data.